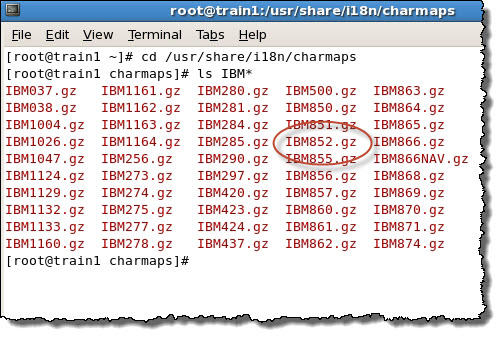

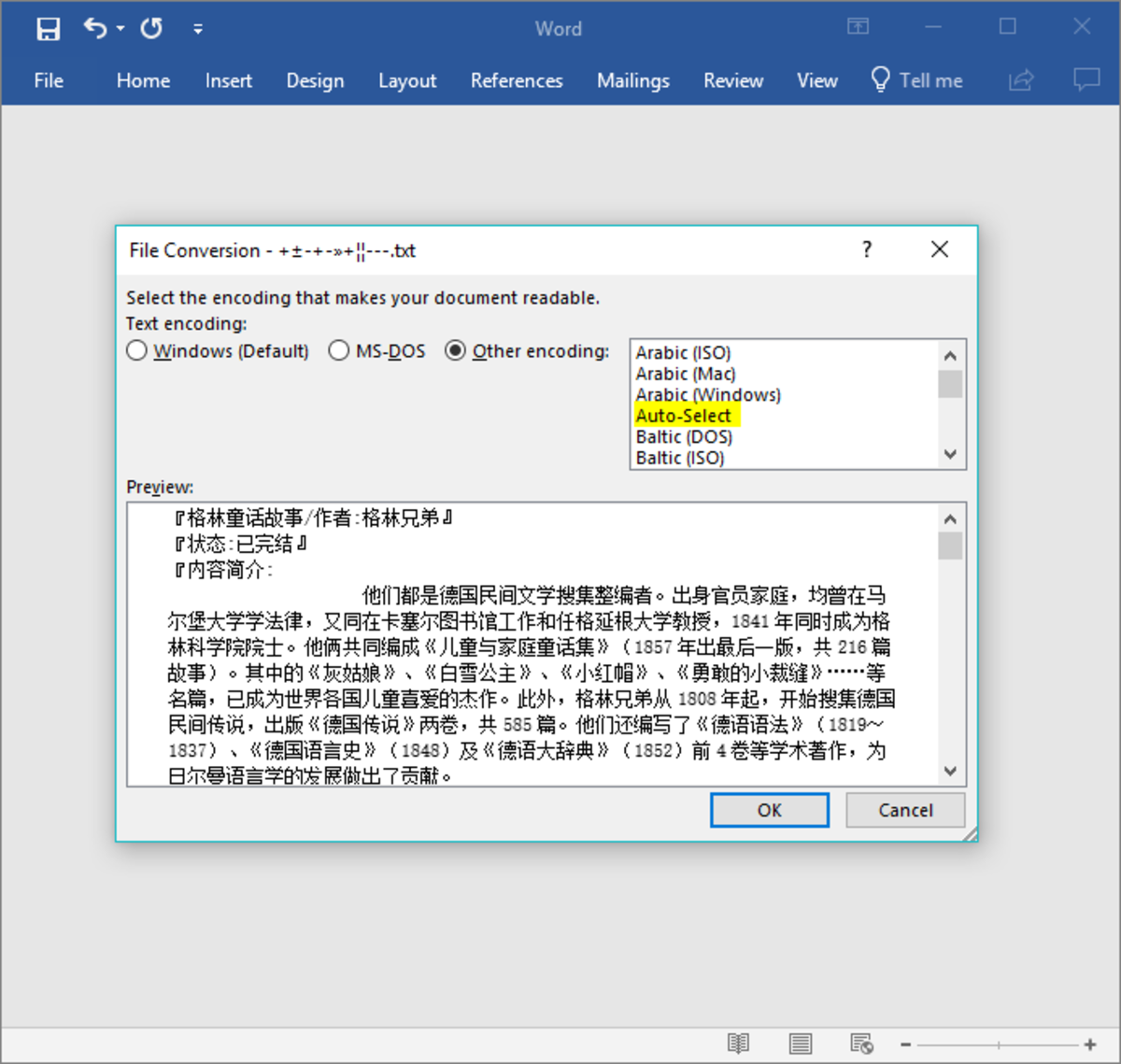

I try to detect which character encoding is used in my file. I tried this code to get the standard encoding:

```csharp
using System.IO;
using System.Text;

public static Encoding GetFileEncoding(string srcFile)
{
    // *** Use Default of Encoding.Default (Ansi CodePage)
    Encoding enc = Encoding.Default;

    // *** Detect byte order mark if any - otherwise assume default
    byte[] buffer = new byte[5];
    using (FileStream file = new FileStream(srcFile, FileMode.Open))
        file.Read(buffer, 0, 5);

    if (buffer[0] == 0xef && buffer[1] == 0xbb && buffer[2] == 0xbf)
        enc = Encoding.UTF8;                  // UTF-8 BOM
    else if (buffer[0] == 0xfe && buffer[1] == 0xff)
        enc = Encoding.BigEndianUnicode;      // UTF-16 BE BOM
    else if (buffer[0] == 0 && buffer[1] == 0 && buffer[2] == 0xfe && buffer[3] == 0xff)
        enc = new UTF32Encoding(true, true);  // UTF-32 BE BOM
    else if (buffer[0] == 0x2b && buffer[1] == 0x2f && buffer[2] == 0x76)
        enc = Encoding.UTF7;                  // UTF-7 signature (obsolete in .NET 5+)
    else if (buffer[0] == 0xFF && buffer[1] == 0xFE)
        enc = Encoding.Unicode;               // UTF-16 LE BOM

    return enc;
}
```

Is there a chart that shows which encoding matches those five first bytes?

You can't depend on the file having a BOM. And non-Unicode encodings don't even have a BOM. There are, however, other ways to detect the encoding.

UTF-32: The BOM is 00 00 FE FF (for BE) or FF FE 00 00 (for LE). But UTF-32 is easy to detect even without a BOM. This is because the Unicode code point range is restricted to U+10FFFF, and thus UTF-32 units always have the pattern 00 {00-10} xx xx (for BE) or xx xx {00-10} 00 (for LE). If the data has a length that's a multiple of 4, and follows one of these patterns, you can safely assume it's UTF-32. False positives are nearly impossible due to the rarity of 00 bytes in byte-oriented encodings.

ASCII: It can be easily identified by the lack of bytes in the 80-FF range.

UTF-8: Lots of UTF-8 files don't have a BOM, especially if they originated on non-Windows systems. But you can safely assume that if a file validates as UTF-8, it is UTF-8. Specifically, given that the data is not ASCII, the false positive rate for a 2-byte sequence is only 3.9% (1920/49152). For a 7-byte sequence, it's less than 1%. For a 12-byte sequence, it's less than 0.1%. For a 24-byte sequence, it's less than 1 in a million.

UTF-16: The BOM is FE FF (for BE) or FF FE (for LE). Note that the UTF-16LE BOM is found at the start of the UTF-32LE BOM, so check UTF-32 first. If you happen to have a file that consists mainly of ISO-8859-1 characters, having half of the file's bytes be 00 would also be a strong indicator of UTF-16.

XML: If your file starts with the bytes 3C 3F 78 6D 6C (i.e., the ASCII characters "<?xml"), then look for an encoding declaration. If absent, then assume UTF-8, which is the default XML encoding. If you need to support EBCDIC, also look for the equivalent sequence 4C 6F A7 94 93. In general, if you have a file format that contains an encoding declaration, then look for that declaration rather than trying to guess the encoding.

There are hundreds of other encodings, which require more effort to detect. I recommend trying Mozilla's charset detector.

If you've ruled out the UTF encodings, and don't have an encoding declaration or statistical detection that points to a different encoding, assume ISO-8859-1 or the closely related Windows-1252. (Note that the latest HTML standard requires an "ISO-8859-1" declaration to be interpreted as Windows-1252.) Being Windows' default code page for English (and other popular languages like Spanish, Portuguese, German, and French), it's the most commonly encountered encoding other than UTF-8.
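The checks discussed in this thread (BOMs first, then the BOM-less UTF-32 pattern, the ASCII test, UTF-8 validation, and finally a Windows-1252 fallback) could be combined roughly as follows. This is only a minimal sketch: `EncodingSniffer`, `GuessedEncoding`, and `Guess` are hypothetical names made up for illustration, the whole file is assumed to fit in memory, and XML declarations, BOM-less UTF-16, and statistical detection are deliberately left out.

```csharp
using System;
using System.Text;

// Hypothetical result type; not part of .NET.
public enum GuessedEncoding { Ascii, Utf8, Utf16LE, Utf16BE, Utf32LE, Utf32BE, Windows1252 }

public static class EncodingSniffer
{
    // Guesses the encoding of a complete byte buffer (assumption: the whole file is loaded).
    public static GuessedEncoding Guess(byte[] data)
    {
        // 1. BOMs. UTF-32 is tested before UTF-16 because FF FE 00 00 starts with FF FE.
        if (StartsWith(data, 0x00, 0x00, 0xFE, 0xFF)) return GuessedEncoding.Utf32BE;
        if (StartsWith(data, 0xFF, 0xFE, 0x00, 0x00)) return GuessedEncoding.Utf32LE;
        if (StartsWith(data, 0xEF, 0xBB, 0xBF)) return GuessedEncoding.Utf8;
        if (StartsWith(data, 0xFE, 0xFF)) return GuessedEncoding.Utf16BE;
        if (StartsWith(data, 0xFF, 0xFE)) return GuessedEncoding.Utf16LE;

        // 2. BOM-less UTF-32: every 4-byte unit must encode a value <= U+10FFFF.
        if (LooksLikeUtf32(data, bigEndian: true)) return GuessedEncoding.Utf32BE;
        if (LooksLikeUtf32(data, bigEndian: false)) return GuessedEncoding.Utf32LE;

        // 3. ASCII: no byte in the 80-FF range.
        if (Array.TrueForAll(data, b => b < 0x80)) return GuessedEncoding.Ascii;

        // 4. If the bytes validate as UTF-8, treat them as UTF-8.
        if (IsValidUtf8(data)) return GuessedEncoding.Utf8;

        // 5. Fallback suggested in the answer.
        return GuessedEncoding.Windows1252;
    }

    private static bool StartsWith(byte[] data, params byte[] prefix)
    {
        if (data.Length < prefix.Length) return false;
        for (int i = 0; i < prefix.Length; i++)
            if (data[i] != prefix[i]) return false;
        return true;
    }

    private static bool LooksLikeUtf32(byte[] data, bool bigEndian)
    {
        if (data.Length == 0 || data.Length % 4 != 0) return false;
        for (int i = 0; i < data.Length; i += 4)
        {
            // Pattern: 00 {00-10} xx xx (BE) or xx xx {00-10} 00 (LE).
            byte top = bigEndian ? data[i] : data[i + 3];
            byte plane = bigEndian ? data[i + 1] : data[i + 2];
            if (top != 0x00 || plane > 0x10) return false;
        }
        return true;
    }

    private static bool IsValidUtf8(byte[] data)
    {
        try
        {
            // throwOnInvalidBytes: true makes the decoder reject malformed sequences.
            new UTF8Encoding(false, true).GetCharCount(data);
            return true;
        }
        catch (DecoderFallbackException)
        {
            return false;
        }
    }
}
```

Returning an enum rather than an `Encoding` instance is a design choice for the sketch: it sidesteps the fact that code page 1252 may need an extra encoding provider registered on newer .NET runtimes. A caller would typically read the file with `File.ReadAllBytes(path)`, pass the result to `Guess`, and map the returned value to whatever `Encoding` object it needs.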